
Round Robin and the I/O Operations Limit can be set on a per-device basis: as every new volume is added, these options can be set against that volume. This is not a particularly good option, because it must be repeated for every new volume on every host, which makes it easy to forget.
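To illustrate why the per-device approach scales poorly, here is a minimal Python sketch that builds the two `esxcli` invocations needed for each volume. The helper name `round_robin_commands` and the device identifiers are hypothetical, and the `esxcli` options shown are the ones recalled from VMware's command reference, so verify them against your ESXi build before use. The sketch only builds argument lists; it does not run anything on a host.

```python
from typing import List


def round_robin_commands(device_ids: List[str], iops_limit: int = 1) -> List[List[str]]:
    """Build esxcli argument lists that enable Round Robin and set the
    I/O Operations Limit for each device (two commands per device)."""
    if iops_limit < 1:
        raise ValueError("the I/O Operations Limit must be at least 1")
    commands: List[List[str]] = []
    for device in device_ids:
        # Set the path selection policy for this device to Round Robin.
        commands.append([
            "esxcli", "storage", "nmp", "device", "set",
            "--device", device, "--psp", "VMW_PSP_RR",
        ])
        # Switch logical paths after every `iops_limit` I/Os.
        commands.append([
            "esxcli", "storage", "nmp", "psp", "roundrobin", "deviceconfig", "set",
            "--device", device, "--type", "iops", "--iops", str(iops_limit),
        ])
    return commands


if __name__ == "__main__":
    # Hypothetical device identifiers, for illustration only.
    for argv in round_robin_commands(["naa.example0001", "naa.example0002"]):
        print(" ".join(argv))
```

Every volume adds two commands, and the whole list has to be run again on every host, which is the repetition the paragraph above warns about.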

#How to add a network datastore to vmware esxi 6 cli update

If you are running earlier than ESXi 6.0 Express Patch 5 or 6.5 Update 1, there are a variety of ways to configure Round Robin and the I/O Operations Limit. VMW_SATP_ALUA PURE FlashArray system VMW_PSP_RR iops=1įor information, refer to this blog post:Ĭonfiguring Round Robin and the I/O Operations Limit

#How to add a network datastore to vmware esxi 6 cli driver

Name Device Vendor Model Driver Transport Options Rule Group Claim Options Default PSP PSP Options Description

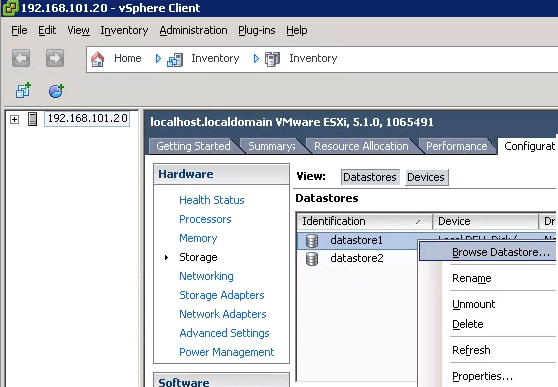

Inside of ESXi you will see a new system rule:

During a Purity upgrade each controller is rebooted in turn, so twice during the process half of the FlashArray front-end ports go away. Because of this, we want to ensure that all hosts are actively using both controllers prior to the upgrade. One method used to confirm this is to check the I/O balance from each host across both controllers. When volumes are configured to use Most Recently Used, an imbalance of 100% is usually observed (ESXi tends to select paths that lead to the same front-end port for all devices). This then means additional troubleshooting to make sure that the host can survive a controller reboot. When Round Robin is enabled with the default I/O Operations Limit, port imbalance improves to a difference of about 20-30%. When the I/O Operations Limit is set to 1, this imbalance is less than 1%. This gives Pure Storage and the end user confidence that all hosts are properly using all available front-end ports.

For these three reasons (performance, faster path failover, and balanced I/O during upgrades), Pure Storage highly recommends altering the I/O Operations Limit to 1. For additional information you can read the VMware KB regarding setting the IOPS limit.

ESXi 6.0 Express Patch 5 or 6.5 Update 1 and later

Starting with ESXi 6.0 Express Patch 5 (build 5572656) and later (release notes) and ESXi 6.5 Update 1 (build 5969303) and later (release notes), Round Robin with an I/O Operations Limit of 1 is the default configuration for all Pure Storage FlashArray devices (iSCSI and Fibre Channel), and no configuration is required. A new default SATP rule, provided by VMware, was built specifically for the FlashArray to match Pure Storage's best practices.
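The imbalance percentages quoted above can be made concrete with a small calculation. The text does not say how imbalance is measured, so this sketch assumes one plausible definition: the gap between the busiest and the least busy port, expressed as a percentage of the busiest. The function name and port labels are hypothetical.

```python
from typing import Dict


def port_imbalance_percent(io_per_port: Dict[str, int]) -> float:
    """Return the gap between the busiest and least busy port as a
    percentage of the busiest port's I/O count (assumed definition)."""
    if not io_per_port:
        raise ValueError("need at least one port")
    busiest = max(io_per_port.values())
    quietest = min(io_per_port.values())
    if busiest == 0:
        # No I/O at all, so there is nothing to be imbalanced.
        return 0.0
    return 100.0 * (busiest - quietest) / busiest


if __name__ == "__main__":
    # Hypothetical counters: all I/O lands on one controller's port.
    print(port_imbalance_percent({"ct0.fc0": 1000, "ct1.fc0": 0}))
    # Hypothetical counters: a moderately uneven spread.
    print(port_imbalance_percent({"ct0.fc0": 1000, "ct1.fc0": 750}))
```

Under this definition, a host that sends everything to one port scores 100%, which matches the Most Recently Used case described above.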

#How to add a network datastore to vmware esxi 6 cli upgrade

The Round Robin Path Selection Policy allows for additional tuning of its path-switching behavior in the form of a setting called the I/O Operations Limit. The I/O Operations Limit (sometimes called the "IOPS" value) dictates how often ESXi switches logical paths for a given device. By default, when Round Robin is enabled on a device, ESXi will switch to a new logical path every 1,000 I/Os. In other words, ESXi will choose a logical path and start issuing all I/Os for that device down that path. Once it has issued 1,000 I/Os for that device down that path, it will switch to a new logical path, and so on.

Pure Storage recommends tuning this value down to the minimum of 1. This will cause ESXi to change logical paths after every single I/O, instead of every 1,000. This recommendation is made for a few reasons:

Often the reason cited to change this value is performance. While this is true in certain cases, the performance impact of changing this value is not usually profound (generally a single-digit percentage increase). Changing this value from 1,000 to 1 can improve performance, but it generally will not solve a major performance problem. Regardless, changing this value can improve performance in some use cases, especially with iSCSI.

It has been noted in testing that ESXi will fail logical paths much more quickly when this value is set to the minimum of 1. During a physical failure of the storage environment (loss of an HBA, switch, cable, port, or controller), ESXi will, after a certain period of time, fail any logical path that relies on the failed physical hardware and will stop attempting to use it for a given volume. This failure does not always happen immediately. When the I/O Operations Limit is set to the default of 1,000, path failover can sometimes take tens of seconds, which can lead to a noticeable disruption in performance during the failure. When this value is set to the minimum of 1, path failover generally drops to under ten seconds. This greatly reduces the impact of a physical failure in the storage environment and provides greater performance resiliency and reliability.

When Purity is upgraded on a FlashArray, the following process is observed (at a high level): upgrade Purity on one controller, reboot it, wait for it to come back up, upgrade Purity on the other controller, reboot it, and you're done.
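The path-switching rule described above can be sketched as a toy model in Python. This is an illustration of the counting logic only, assuming paths are visited in a fixed cyclic order; it is not how ESXi is implemented, and the class name, function name, and path labels are hypothetical.

```python
from collections import Counter
from typing import List


class RoundRobinSelector:
    """Toy model: stay on one path until `iops_limit` I/Os have been
    issued on it, then move to the next path in cyclic order."""

    def __init__(self, paths: List[str], iops_limit: int = 1000) -> None:
        if not paths:
            raise ValueError("need at least one path")
        if iops_limit < 1:
            raise ValueError("the I/O Operations Limit must be at least 1")
        self.paths = list(paths)
        self.iops_limit = iops_limit
        self._index = 0
        self._issued_on_path = 0

    def next_path(self) -> str:
        """Return the path the next I/O would be issued on."""
        if self._issued_on_path == self.iops_limit:
            # Limit reached on the current path: switch to the next one.
            self._index = (self._index + 1) % len(self.paths)
            self._issued_on_path = 0
        self._issued_on_path += 1
        return self.paths[self._index]


def io_distribution(paths: List[str], iops_limit: int, total_ios: int) -> Counter:
    """Count how many of `total_ios` I/Os land on each path."""
    selector = RoundRobinSelector(paths, iops_limit)
    return Counter(selector.next_path() for _ in range(total_ios))


if __name__ == "__main__":
    paths = ["path-a", "path-b", "path-c", "path-d"]  # hypothetical labels
    print(io_distribution(paths, iops_limit=1000, total_ios=1500))
    print(io_distribution(paths, iops_limit=1, total_ios=1500))
```

In this model a short burst of 1,500 I/Os touches only two of four paths with a limit of 1,000, but is spread evenly across all four with a limit of 1, which is consistent with the balance argument made for the lower setting.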